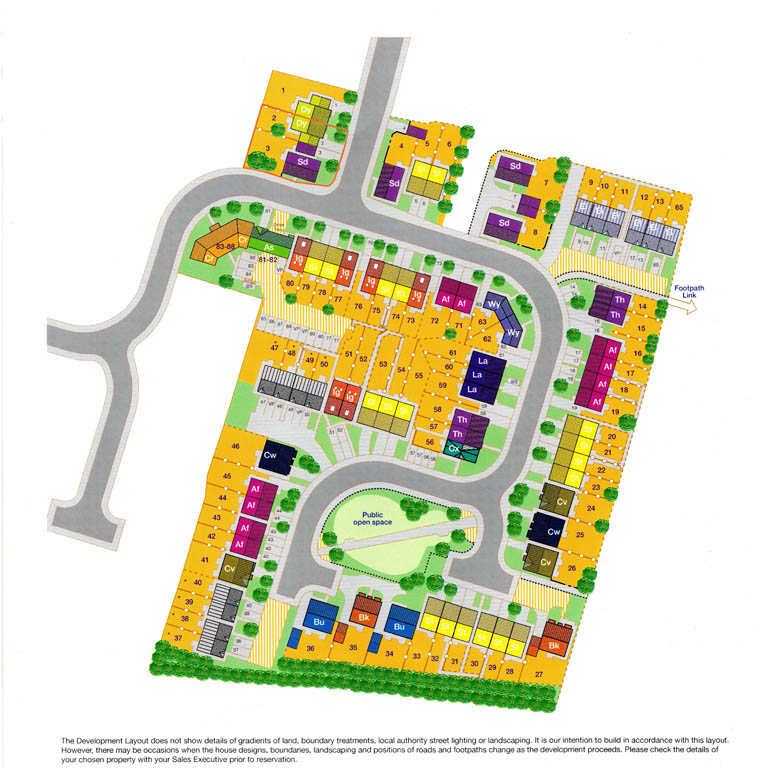

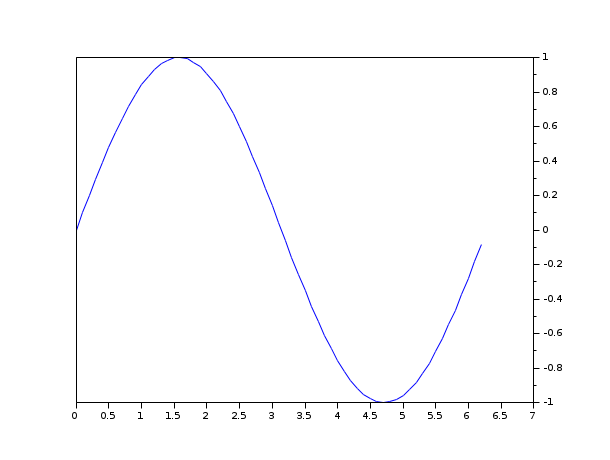

The hyperplane is the decision boundary that determines how new observations are classified.

Consider a binary classification problem with input vectors \(x_i\) (the input space) and labels (also called targets or classes) \(y_i = \pm 1\). Let \(S\) denote the indices of the support vectors.

In R, `svm()` can also predict one attribute based on another. For example, after transforming the type from factor to numeric in order to perform regression: `svm.model <- svm(my.data$x1, my.data$x2, type="eps-regression")`. This is again the subject of an optimization procedure that finds the optimal \(y\). For regression, the function `svm()` uses the option `type="eps-regression"`.

In Python, create a support vector classifier with `svc = LinearSVC(C=1.0)` and train the model with `model = svc.fit(Xstd, y)`, then plot the decision boundary hyperplane. In this visualization, all observations of class 0 are black and observations of class 1 are light gray.

Plotting an SVM separator (hyperplane): Hi, I am learning machine learning, and I'm trying to plot an SVM separator. The separator equation is \(w'x + b = 0\). When \(x\) is second order (two-dimensional), I assumed that \(x_1 = x\) and \(x_2 = y\), so I can get a line \(y = -(w_1 x + b)/w_2\), then `x = linspace(.)` and `plot(x, y)` to get a linear separator.

All of my code works except for the 3D hyperplane, which is supposed to be there but isn't. I already made a working circular graph with data points and have even managed to add a z-axis and make it 3D to better classify the data points linearly with a 3D hyperplane.

In a 3-dimensional space, the hyperplanes are the 2-dimensional planes. The span of two linearly independent vectors in three-dimensional space forms a plane. Here, the column space of the matrix is spanned by two 3-dimensional vectors. We can extend projections to three dimensions and still visualize the projection as projecting a vector onto a plane.
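The idea of projecting a vector onto the plane spanned by two 3-dimensional vectors can be sketched with NumPy. This is only an illustration under stated assumptions: the matrix `A`, the vector `b`, and the helper name `project_onto_column_space` are hypothetical and do not come from the text, and the projection is computed through a least-squares solve rather than any method the original author may have used.

```python
import numpy as np


def project_onto_column_space(A, b):
    """Orthogonally project b onto the column space of A.

    Hypothetical helper: the columns of A are assumed to be linearly independent.
    """
    # The least-squares solution x_hat minimizes ||A x - b||,
    # so A @ x_hat is the point of the column space closest to b.
    x_hat, *_ = np.linalg.lstsq(A, b, rcond=None)
    return A @ x_hat


# Assumed example data: two 3-dimensional vectors spanning the xy-plane.
A = np.array([[1.0, 0.0],
              [0.0, 1.0],
              [0.0, 0.0]])
b = np.array([1.0, 2.0, 3.0])

p = project_onto_column_space(A, b)  # projection of b onto the plane
residual = b - p                     # component of b perpendicular to the plane

print("projection:", p)
print("A^T residual:", A.T @ residual)
```

The residual `b - p` is perpendicular to both spanning vectors, which is what makes `p` the orthogonal projection onto the plane.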
In geometry, a hyperplane is a subspace whose dimension is one less than that of its ambient space.

For example, here we are using two features, so we can plot the decision boundary in 2D. The lines you see on the plot above are not the hyperplane \(w^T x\). Let's discuss how the weights \(w\) relate to the slope of the decision boundary. Specifically, any observation above the line will be classified as class 0, while any observation below the line will be classified as class 1.

    import matplotlib.pyplot as plt
    from sklearn import svm
    from sklearn.datasets import make_blobs
    from sklearn.inspection import DecisionBoundaryDisplay

    # we create two clusters of random points
    n_samples_1 = 1000
    n_samples_2 = 100
    centers = ...
    clusters_std = ...
    X, y = make_blobs(
        n_samples=[n_samples_1, n_samples_2],
        centers=centers,
        cluster_std=clusters_std,
        random_state=0,
        shuffle=False,
    )

    # fit the model and get the separating hyperplane
    clf = svm.SVC(kernel="linear")
    clf.fit(X, y)

    # fit the model and get the separating hyperplane using weighted classes
    wclf = svm.SVC(kernel="linear", class_weight=...)
    wclf.fit(X, y)

    # plot the samples
    plt.scatter(X[:, 0], X[:, 1], c=y, cmap=plt.cm.Paired, edgecolors="k")

    # plot the decision functions for both classifiers
    ax = plt.gca()
    disp = DecisionBoundaryDisplay.from_estimator(
        clf, X, plot_method="contour", colors="k",
        levels=..., alpha=0.5, linestyles=..., ax=ax,
    )

    # plot decision boundary and margins for weighted classes
    wdisp = DecisionBoundaryDisplay.from_estimator(
        wclf, X, plot_method="contour", colors="r",
        levels=..., alpha=0.5, linestyles=..., ax=ax,
    )

    plt.legend(..., loc="upper right")
    plt.show()
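How the weights of a linear SVM relate to the slope of the decision boundary can be sketched as follows. The data set here is an assumption made purely for illustration (cluster centers, spread, and sample count are not taken from the text). For a fitted linear `SVC`, the boundary is the set of points where `w[0] * x1 + w[1] * x2 + b = 0`, which rearranges to a line with slope `-w[0] / w[1]` and intercept `-b / w[1]`, provided `w[1]` is nonzero.

```python
import numpy as np
from sklearn import svm
from sklearn.datasets import make_blobs

# Assumed toy data: two 2D clusters (values chosen only for illustration).
X, y = make_blobs(
    n_samples=200,
    centers=[[0.0, 0.0], [4.0, 4.0]],
    cluster_std=0.8,
    random_state=0,
)

# Fit a linear SVM; coef_ and intercept_ hold w and b of w . x + b = 0.
clf = svm.SVC(kernel="linear", C=1.0)
clf.fit(X, y)
w = clf.coef_[0]
b = clf.intercept_[0]

# Rearrange w[0]*x1 + w[1]*x2 + b = 0 into x2 = slope * x1 + intercept.
slope = -w[0] / w[1]
intercept = -b / w[1]

# Sample points along the boundary line across the range of the first feature.
x1 = np.linspace(X[:, 0].min(), X[:, 0].max(), 50)
x2 = slope * x1 + intercept
boundary_points = np.column_stack([x1, x2])

# Points on the boundary should have a decision value of (numerically) zero.
boundary_scores = clf.decision_function(boundary_points)
print("slope:", slope, "intercept:", intercept)
print("largest |score| on the line:", np.abs(boundary_scores).max())
```

Passing `x1` and `x2` to `plt.plot(x1, x2)` on top of a scatter plot of `X` would draw this separator. Which side of the line maps to which class depends on the sign of the decision function, so it is worth checking against `clf.predict` rather than assuming.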
